Artificial intelligence is expected to evolve through the continuous influx of data from the Internet of Things (IoT). If generative AI represents the brain, IoT serves as the nervous system, relaying sensory data to drive learning and decision-making. As of last year, there were roughly two connected IoT devices for every person on the planet. That number is projected to grow by approximately 2.5 times over the next 15 years. These devices generate a wide range of data: telemetry from industrial equipment, environmental metrics from agricultural fields, data from connected vehicles, video from surveillance cameras, and vibration data, such as frequency, amplitude, and phase, from sensors installed on high-value machinery. This diverse data ecosystem supports multimodal learning, in which a model learns from several kinds of input at once, much as humans have long combined sight, sound, and touch to process information.

What's new is the accelerating pace of innovation in using IoT data to enhance AI systems across several critical industries. For example, pharmaceutical companies can now manufacture drugs more safely and effectively. In manufacturing, plants are leveraging IoT-driven AI to improve product quality, reduce costs, and increase throughput and operational efficiency. Even at the consumer level, smart home technologies are helping individuals enhance security and overall lifestyle convenience.

Benefits of Generative AI with IoT Data

What should be expected from a system that fully leverages IoT and generative AI?

Multimodal data fusion: A key capability is the integration of diverse data types to provide richer, more actionable insights. For example, consider the task of tracking a shipping container across the ocean. In this scenario, satellite-based location and communication systems monitor the container’s position, while internal sensors measure temperature, humidity, and pressure to ensure the integrity of the goods being transported. Combining the two streams enables real-time quality assurance across global supply chains.
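As a rough illustration of the fusion step in the container example, the sketch below joins two hypothetical streams by timestamp: position fixes and environmental readings. The record types, field names, and the 8 °C limit are assumptions made for the example, not part of any AWS service.

```python
from bisect import bisect_right
from dataclasses import dataclass


@dataclass(frozen=True)
class PositionFix:
    ts: float  # seconds since the start of the voyage (illustrative)
    lat: float
    lon: float


@dataclass(frozen=True)
class SensorReading:
    ts: float
    temperature_c: float
    humidity_pct: float


def fuse(positions, readings, max_temp_c=8.0):
    """Attach the most recent position at or before each sensor reading.

    `positions` must be sorted by timestamp. Readings taken before the
    first fix are skipped. `max_temp_c` is a hypothetical cold-chain limit.
    """
    pos_times = [p.ts for p in positions]
    fused = []
    for r in readings:
        i = bisect_right(pos_times, r.ts) - 1
        if i < 0:
            continue  # no position known yet for this reading
        p = positions[i]
        fused.append({
            "ts": r.ts,
            "lat": p.lat,
            "lon": p.lon,
            "temperature_c": r.temperature_c,
            "humidity_pct": r.humidity_pct,
            "temp_excursion": r.temperature_c > max_temp_c,
        })
    return fused


positions = [PositionFix(0, 35.0, 139.0), PositionFix(600, 35.1, 139.4)]
readings = [SensorReading(30, 4.5, 60.0), SensorReading(650, 9.2, 71.0)]
fused_records = fuse(positions, readings)
for rec in fused_records:
    print(rec)
```

Each fused record says both where the container was and what condition the goods were in, which is what allows a temperature excursion to be tied to a specific leg of the journey.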

Multifaceted root cause analysis: As systems grow in complexity, so does the difficulty of root cause analysis. When diagnosing issues, such as troubleshooting an internet router, a support agent can remotely access telemetry data streaming from the device, analyze it, and guide the customer through the fix. This capability can eliminate the need for expensive in-person service calls.

Dynamic query handling: Imagine a technician working on a complex assembly line or in the field under hazardous conditions, such as repairing electrical equipment on top of a tower. With a context-aware AI assistant trained on the full spectrum of equipment failure modes, the technician can ask questions in natural language and receive immediate, accurate responses.

Explainable alerts and insights: While common error codes, such as "404," are widely understood, many system messages remain cryptic and unhelpful. Advanced AI systems can translate these error codes into plain language, explaining what went wrong and suggesting steps to resolve the issue.

Challenges in Delivering Intelligent Applications

These are the key challenges in building an effective generative AI system integrated with IoT data.

IoT generates an enormous volume of data, streaming in real time from countless devices. A robust system must be able to ingest, parse, filter, and identify useful signals from that data efficiently and at scale. The ability to separate valuable insights from noise is crucial for making informed real-time decisions and implementing automation.
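One simple way to separate signal from noise in a stream is a rolling z-score filter. The sketch below is only an illustration of that idea; the window size, threshold, and sample vibration values are assumptions, and production systems typically use more robust methods.

```python
from collections import deque
from statistics import mean, pstdev


def detect_anomalies(values, window=5, threshold=3.0):
    """Yield (index, value) for points far from the recent rolling baseline."""
    recent = deque(maxlen=window)
    for i, v in enumerate(values):
        if len(recent) == window:
            mu = mean(recent)
            sigma = pstdev(recent)
            if sigma > 0 and abs(v - mu) / sigma > threshold:
                yield i, v
                continue  # keep the outlier out of the baseline
        recent.append(v)


# Hypothetical vibration velocity samples in mm/s.
vibration_mm_s = [2.0, 2.1, 1.9, 2.0, 2.2, 2.1, 9.5, 2.0, 1.9]
anomalies = list(detect_anomalies(vibration_mm_s))
print(anomalies)
```

Because the generator processes one value at a time and keeps only a fixed-size window, the same logic can run on a device or in a stream processor without buffering the whole series.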

Another core challenge lies in the system’s capacity for conceptual connection. Humans naturally excel at linking seemingly unrelated ideas. Replicating this capability in machines is complex, but progress in this area will allow AI systems to draw connections across multiple domains, enabling them to solve problems through comparison, abstraction, and contextual understanding.

Infrastructure is also a critical consideration. Generative AI workloads demand massive compute power and scalable architectures.

Data privacy and security must be foundational. Handling sensitive data, particularly from connected devices in homes, industries, and healthcare settings, requires robust safeguards. A well-architected system should embed privacy and security best practices from the ground up.

Services

AWS offers a deeply integrated set of services designed to support the development of intelligent, IoT-connected generative AI systems. These capabilities span from edge to cloud, enabling organizations to build scalable, secure, and data-driven solutions.

At the edge, AWS provides device runtimes and operating systems that allow the creation of cost-effective, intelligent sensors. For large-scale device environments, AWS offers robust data ingestion engines that can manage fleets of millions of devices. These systems support remote updates, restarts, application lifecycle management, and end-to-end security.

In the cloud, AWS provides a comprehensive suite of services to process, store, and integrate data streams. These services enable real-time data fusion, combining telemetry from diverse sources to generate deeper insights.

On the AI and analytics side, AWS offers powerful tools for model training and inference. Amazon SageMaker provides a fully managed environment for building, training, and deploying machine learning models, and Amazon Bedrock offers access to a range of LLMs. AWS also delivers advanced business intelligence tools that help translate massive volumes of IoT and AI-generated data into actionable insights about the physical world.

Emerging Patterns for Intelligent Applications

There are three primary design patterns for integrating AI with IoT. The first is analysis, which uses AI to perform more complex analyses than were previously possible. The second is assistants. This pattern involves building AI-powered assistants, similar to highly capable chatbots, that help users solve complex problems more quickly and accurately. The third is autonomy. This pattern focuses on building systems that can make decisions independently, driven by real-time data from the physical world.

Analysis

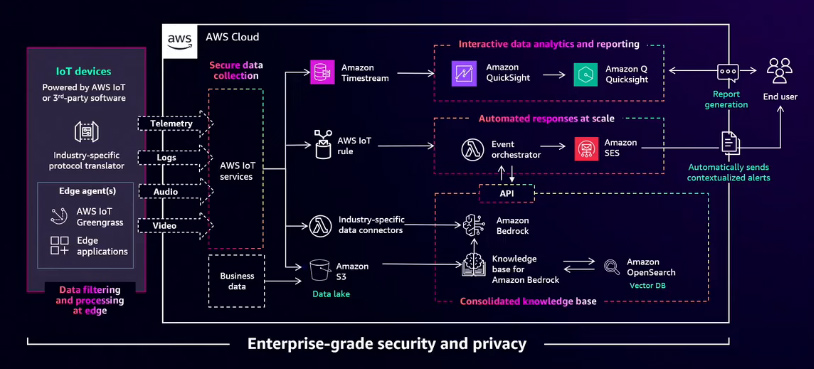

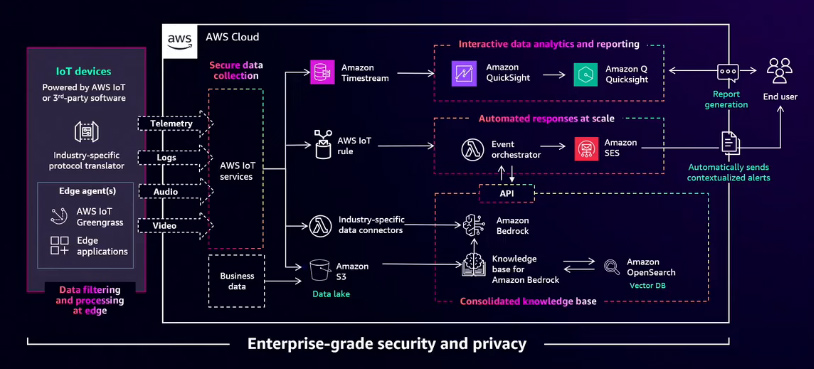

Automated Data Analysis and Alerting

Imagine you are responsible for managing a manufacturing floor, whether producing MRI machines, multifunction printers, or other complex products. Today, many tools are available to help monitor and improve the efficiency of such operations. Data can be collected from equipment that has been in service for decades, cleaned, and standardized into a model that can be analyzed using modern tools. Subject matter experts then review this data to generate reports, allowing you to assess the health of machines, predict potential failures, and plan maintenance activities accordingly.

However, this traditional reporting process comes with limitations. Reports are often tied to specific subject matter experts and may exist in isolated silos. They also take time to produce, typically generated at the end of the day, meaning any issues detected earlier may go unaddressed until the shift is over. This is where AI significantly enhances the process. AI systems, trained on massive datasets and supported by powerful computing resources, enable real-time reporting and alerting. Instead of waiting until the end of the day, issues can be detected and flagged as they occur, allowing teams to take immediate action. Rather than receiving isolated reports for each piece of equipment or production line, AI-driven analysis provides integrated, holistic insights.

Assistants

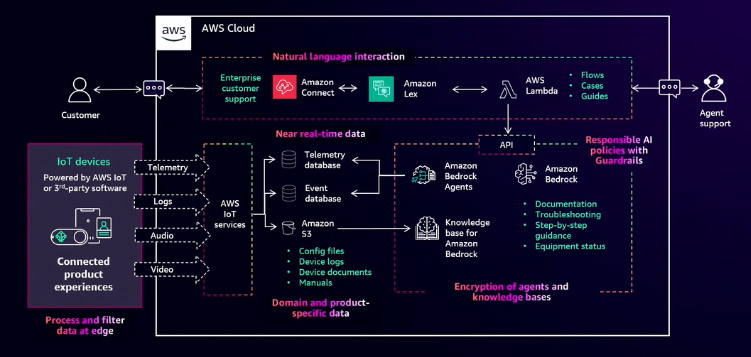

Improve Connected Product Experience

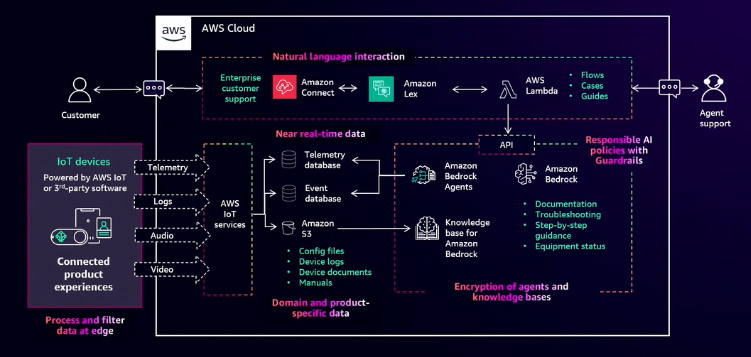

Imagine purchasing a smart home device, like a robot vacuum, online at a great price. You unbox it and set it up, but just a few hours later, the device stops working. That moment of convenience quickly turns into frustration. A product designed to save time is now causing disruption. But what if that issue could be resolved instantly or even prevented altogether?

On the left side of the diagram are connected products that capture telemetry and diagnostic data using the AWS IoT suite. This data can be processed at the edge and then streamed into the cloud to the most appropriate AWS data store. Once the data is in AWS, it becomes accessible to Amazon Bedrock and its suite of generative AI tools. Using Bedrock agents and agentic workflows, diagnostic checks can be performed in real time with the chat agent that interacts with the customer. When combined with a knowledge base of configuration files, device documentation, and system logs, the root cause of an issue can be identified. This enables the delivery of a personalized, step-by-step solution back to the customer, resolving the problem quickly. The result is a significantly improved customer experience.

At the same time, customers expect this convenience without compromising privacy or security. Amazon Bedrock provides a comprehensive set of features to support responsible AI use and data protection. This includes built-in encryption and governance controls. With Amazon Bedrock Guardrails, customers can define content policies and enforce behavior boundaries, ensuring a positive user experience.
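The diagnostic flow can be sketched roughly as follows. This is a simplified illustration, not the architecture in the diagram: it calls the Amazon Bedrock Converse API directly instead of using Bedrock agents or a managed knowledge base, and the device ID, telemetry fields, documentation snippets, and model ID are all hypothetical. A stub client stands in for the real one so the example runs without AWS credentials.

```python
import json


def build_diagnostic_prompt(device_id, telemetry, kb_snippets):
    """Combine device telemetry and documentation excerpts into one prompt."""
    docs = "\n".join(f"- {s}" for s in kb_snippets)
    return (
        f"Device {device_id} reported the following telemetry:\n"
        f"{json.dumps(telemetry, indent=2)}\n\n"
        f"Relevant documentation excerpts:\n{docs}\n\n"
        "Identify the most likely root cause and give the customer "
        "numbered, step-by-step instructions to fix it."
    )


def diagnose(client, model_id, device_id, telemetry, kb_snippets):
    """Send the prompt through the Converse API and return the reply text."""
    prompt = build_diagnostic_prompt(device_id, telemetry, kb_snippets)
    response = client.converse(
        modelId=model_id,
        messages=[{"role": "user", "content": [{"text": prompt}]}],
        inferenceConfig={"maxTokens": 512, "temperature": 0.2},
    )
    return response["output"]["message"]["content"][0]["text"]


class FakeBedrockClient:
    """Stand-in that mimics the Converse response shape (no network calls)."""

    def converse(self, **kwargs):
        self.last_request = kwargs
        return {
            "output": {
                "message": {
                    "role": "assistant",
                    "content": [{"text": "Stub reply: check the brush motor."}],
                }
            }
        }


fake_client = FakeBedrockClient()
reply = diagnose(
    fake_client,
    model_id="example-model-id",  # placeholder, not a real model ID
    device_id="vac-001",
    telemetry={"error_code": "E17", "battery_pct": 82, "brush_rpm": 0},
    kb_snippets=["E17: main brush stalled; clear debris and restart."],
)
print(reply)
```

With credentials configured, `boto3.client("bedrock-runtime")` could be passed in place of the stub, along with the ID of a model enabled in the account.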

AWS IoT SiteWise Assistant

Imagine this scenario in an industrial setting, where unplanned downtime can have serious financial consequences, potentially costing hundreds of thousands of dollars per hour. In such high-stakes environments, operators must react quickly to unexpected conditions, which requires immediate access to both specialized knowledge and real-time operational data. AWS IoT SiteWise Assistant, a generative AI feature integrated into AWS IoT SiteWise, addresses this need by delivering concise, natural language summaries of operational data and alarms. This enables operators to understand what is happening on the plant floor, make informed decisions more quickly, and resolve issues more efficiently.

Autonomy

The International Federation of Robotics recently reported a record 4.2 million robots operating in factories worldwide. However, robotics is no longer limited to the factory floor. Today, robots are increasingly integrated into everyday environments, where they may wash dishes in a restaurant or deliver room service in a hotel.

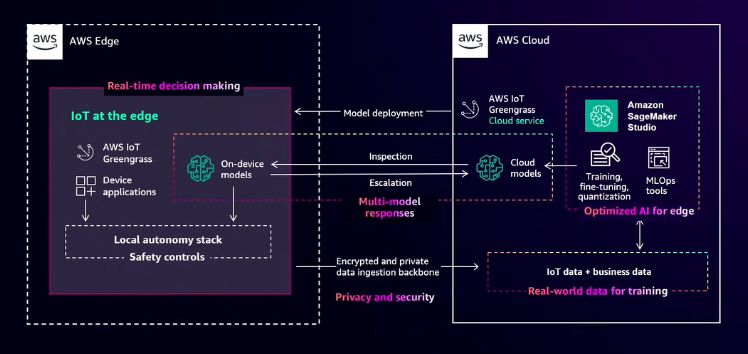

Autonomy with Edge AI and IoT Data

To understand the potential of combining IoT data with AI at the edge for autonomy, it's essential to identify the role IoT plays in enabling intelligent systems. Autonomous systems rely on three key capabilities: the ability to sense, compute, and act. Sensors deliver real-time, multimodal IoT data, including inputs from computer vision, motion detectors, temperature sensors, and other devices. This information is required for edge-based AI applications to perceive and interact with their environment. However, these systems generate vast volumes of data. For example, a single self-driving car can produce between 10 and 100 terabytes of data each day. To support real-time decision-making, this data must be processed immediately, which requires robust edge computing capabilities. Recent advancements in AI-accelerated edge devices have enabled the direct execution of complex AI inference on the device. Local data processing is essential for applications where every millisecond counts, such as collaborative robots working alongside humans or autonomous vehicles navigating busy city streets. When connected, these intelligent edge applications can combine low-latency, on-device inference for immediate decisions with cloud-based inference for more complex analysis that benefits from broader systems-level context.
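To put the self-driving car figure in perspective, the short calculation below converts a daily data volume into the sustained rate a network link would need to carry it. It assumes decimal terabytes (10^12 bytes) and data produced evenly over 24 hours, which is a simplification.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def sustained_rate(tb_per_day):
    """Return (megabytes per second, gigabits per second) for a daily volume."""
    bytes_per_s = tb_per_day * 1e12 / SECONDS_PER_DAY
    return bytes_per_s / 1e6, bytes_per_s * 8 / 1e9


for tb in (10, 100):
    mb_s, gbit_s = sustained_rate(tb)
    print(f"{tb} TB/day is about {mb_s:.0f} MB/s, or {gbit_s:.2f} Gbit/s")
# 10 TB/day is about 116 MB/s, or 0.93 Gbit/s
# 100 TB/day is about 1157 MB/s, or 9.26 Gbit/s
```

Sustaining roughly 1 to 9 Gbit/s of uplink per vehicle is impractical over mobile networks, which is why most of this data has to be processed, filtered, or summarized on the device.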

Intelligence at the Edge

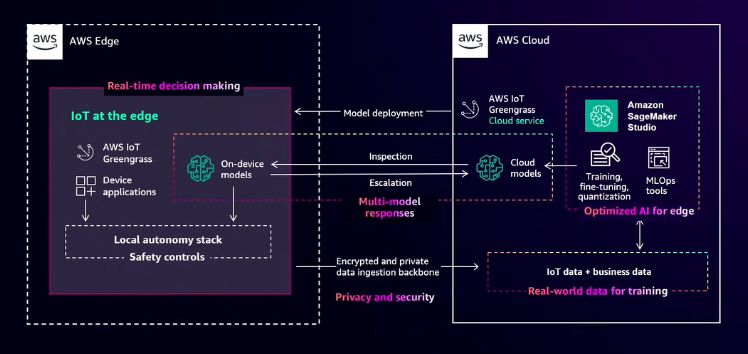

This example illustrates how intelligence at the edge enables real-time decision-making. Imagine a food delivery robot en route to deliver a pizza order. To complete the delivery, the robot must avoid hazards like fountains or drainage ditches and ensure the pizza arrives hot. To do this, it must make obstacle and hazard avoidance decisions in real time, often under unpredictable or disrupted network conditions.

On the left side of this architecture, the delivery robot uses AWS IoT Greengrass edge runtime to deploy and manage local machine learning models. These models, such as those used for computer vision, enable the robot to make real-time decisions and interact with a local autonomy stack. To improve these models over time, the robot collects real-world data, including video and images, from obstacles, edge cases, and failure conditions it encounters. This data is preprocessed at the edge and securely streamed back to AWS using Greengrass edge components and secure credentials. Once in the cloud, this data can be combined with business intelligence to support further training, fine-tuning, and optimization of the models. Amazon SageMaker offers hundreds of pre-trained models and a full suite of machine learning tools to build MLOps pipelines and deploy models back to edge devices. With a well-trained model, the robot can avoid most hazards. However, in unexpected edge cases or failure conditions, foundation models in the cloud provide an escalation path, analyzing issues across the fleet and enabling improvements beyond a single robot or incident. This not only helps the robot adapt to new scenarios but also empowers human operators to respond more quickly.
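The edge-first pattern described here (decide locally, buffer hard cases, upload when the network allows) can be sketched in plain Python. This is an illustrative outline only: it uses no AWS IoT Greengrass or SageMaker APIs, and the class name, confidence threshold, and stub model are assumptions made for the example.

```python
from collections import deque


class EdgeController:
    """Decide locally; queue low-confidence cases for later cloud review."""

    def __init__(self, local_model, confidence_floor=0.8, buffer_size=100):
        self.local_model = local_model
        self.confidence_floor = confidence_floor
        # Bounded buffer: the oldest samples are dropped if the link stays down.
        self.upload_buffer = deque(maxlen=buffer_size)

    def decide(self, frame):
        label, confidence = self.local_model(frame)
        if confidence < self.confidence_floor:
            # Act conservatively now and keep the sample for retraining.
            self.upload_buffer.append(
                {"frame": frame, "label": label, "confidence": confidence}
            )
            return "stop"
        return "avoid" if label == "hazard" else "proceed"

    def flush(self, uplink_ok, send):
        """Send buffered samples while the uplink is available."""
        sent = 0
        while uplink_ok and self.upload_buffer:
            send(self.upload_buffer.popleft())
            sent += 1
        return sent


def stub_model(frame):
    # Stand-in for an on-device vision model: reads a precomputed result.
    return frame["label"], frame["confidence"]


controller = EdgeController(stub_model)
frames = [
    {"id": 1, "label": "clear", "confidence": 0.95},
    {"id": 2, "label": "hazard", "confidence": 0.91},
    {"id": 3, "label": "hazard", "confidence": 0.40},
]
actions = [controller.decide(f) for f in frames]
uploaded = []
sent_offline = controller.flush(uplink_ok=False, send=uploaded.append)
sent_online = controller.flush(uplink_ok=True, send=uploaded.append)
print(actions, sent_offline, sent_online)
```

The robot never waits on the network to act: every decision is made on the device, and only the uncertain cases are forwarded once connectivity returns, where they can feed retraining or a cloud-side escalation path.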

Reference:

AWS Events